Raw file formats are not really well documented and therefore need some reverse engineering. Writing to a file can break it, no matter what part of the file you write to…

Yes. A “read only” database… but well I probably do not have all the details on the topic.

Hopefully DxO will see our different needs and wishes and make the best out of it as usual.

Absolutely.

Use exiftool at your own risk (and keep your originals safe, even with LR).

Excellent remark, that raises a few questions and deserves a few comments. “Immediately” should actually be replaced with “as soon as possible”.

In Lightroom, the user can specify whether edits should be written to the XMP file automatically (which doesn’t mean immediately) or only when the user decides to do so (Ctrl-S in LR). Saving changes automatically is safer in case of a program crash (but it’s not 100% safe because some write operations can be delayed, see below). But this mode can affect performance, especially for local edits. On the other hand, with manual saving the user may forget to save the changes after an editing session. So LR saves to the database (catalog) anyway and lets the user check whether the sidecar file and the database are in sync. If they are not, it also lets the user decide which one prevails.
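To make the "in sync or not, and which one prevails" idea concrete, here is a minimal sketch in Python. It is not how Lightroom does it internally (I have no information on that); the names `SyncState` and `compare`, and the idea of remembering the last content that was written to both places, are my own assumptions for illustration.

```python
from enum import Enum


class SyncState(Enum):
    IN_SYNC = "in sync"
    CATALOG_AHEAD = "catalog has edits not yet written to the sidecar"
    SIDECAR_AHEAD = "sidecar was changed outside the catalog"
    CONFLICT = "both changed, the user must pick which one prevails"


def compare(catalog: str, sidecar: str, last_synced: str) -> SyncState:
    """Three-way comparison of the settings text (hypothetical helper).

    catalog     -- settings currently stored in the database
    sidecar     -- settings currently stored in the sidecar file
    last_synced -- settings as they were the last time both agreed
    """
    if catalog == sidecar:
        return SyncState.IN_SYNC
    if sidecar == last_synced:
        # Only the catalog moved on: safe to write the sidecar.
        return SyncState.CATALOG_AHEAD
    if catalog == last_synced:
        # Only the sidecar moved on: safe to read it back in.
        return SyncState.SIDECAR_AHEAD
    return SyncState.CONFLICT
```

Only the `CONFLICT` case really needs a question to the user; the two "ahead" cases can be resolved without losing anything.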

The same ideas apply to DPL, with one big difference: DOP files are not XML files. XML files can be written and read using generic tools that have been optimized and are very often implemented at the development environment level (by the way, since these tools are natively available in the Microsoft .NET framework, I’m wondering why DPL uses a proprietary text format). Both XML files and DOP files are text files (the DOP format being one of those various JSON-like, curly-bracket-based text formats). So writing to, searching in and reading from these files is a slow process in comparison with binary formats, especially when creating masks for local edits. This is why writing to the sidecar files in real time may cause performance problems on low-end systems or when applying numerous edits to the image. Therefore, it is sometimes necessary to delay the updating of the sidecar file. IMHO, using the XML format would probably be faster than using proprietary code for managing the DOP format. I’m unable to check this, though. However, I have noticed that the DOP files seem to systematically store all the settings, even those that do not differ from the default values. This would be easier to manage with an XML format.

The performance problem can be partially solved by keeping the edits for the current image in memory while editing and saving to the database or to the sidecar file only when the user is idle. Now the question is: which is safer and faster? Database or simple text (sidecar) file? The database is safer because when an operation fails, there’s a fallback mechanism that allows a return to the previous state. With a mere text file, if a write fails, all of the data might be lost.
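A minimal sketch of both sides of that comparison, in Python with SQLite as a stand-in database. The table and column names (`items`, `settings`) and both function names are hypothetical, chosen for the example; this is not DPL's or Lightroom's code. It also shows a common way to make the text file case less fragile: write to a temporary file first, then swap it in with a single rename.

```python
import os
import sqlite3
import tempfile


def save_settings_in_db(conn: sqlite3.Connection, item_id: int,
                        text: str, fail: bool = False) -> None:
    """Update one row inside a transaction; an exception rolls it back."""
    with conn:  # commits on success, rolls back if an exception escapes
        conn.execute("UPDATE items SET settings = ? WHERE id = ?",
                     (text, item_id))
        if fail:
            raise RuntimeError("simulated crash in the middle of the update")


def save_sidecar_atomically(path: str, text: str) -> None:
    """Write to a temp file in the same folder, then replace the sidecar."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as tmp:
            tmp.write(text)
            tmp.flush()
            os.fsync(tmp.fileno())
        # The old sidecar stays intact until this single rename happens.
        os.replace(tmp_path, path)
    except BaseException:
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise
```

With the database, a failed update leaves the previous settings in place. With the naive "open the sidecar and overwrite it" approach, a failure halfway through leaves a truncated file, which is what the temp-file-and-rename trick avoids.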

Which is faster? This is hard to say because the edits are stored in the DPL database in exactly the same form as in the DOP file. The content of the StoreSettings column in the Items table of the database is a mere copy of the DOP file (Lightroom is using exactly the same strategy but it uses the XML format instead). So, I can’t say whether updating a column of text type in the database is faster or slower than rewriting the whole DOP file. Granted, a text format for sidecar files is more practical for a lot of reasons. Using the same format for storing the settings in the database is more questionable. Of course, there are so many settings to record that using a specific column for each of these settings would be awkward, to say the least. However, storing the settings as a binary blob that could be quickly loaded into a well-known in-memory structure without a need for re-interpretation (parsing, in programming parlance) would be more efficient for both reading and updating.
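To illustrate the difference between the two storage forms, here is a small Python sketch. The three settings and the `Settings` type are invented for the example, and JSON only stands in for a curly-bracket text format (it is not the actual DOP syntax). I make no claim about the real speed gain; the point is only that the blob maps straight onto a fixed layout, while the text has to be parsed.

```python
import json
import struct
from typing import NamedTuple


class Settings(NamedTuple):
    """Hypothetical settings record, for illustration only."""
    exposure: float
    contrast: int
    vibrancy: int


# Fixed binary layout: little-endian, one float64 followed by two int32.
LAYOUT = struct.Struct("<dii")


def to_text(s: Settings) -> str:
    # Text form: human-readable, but must be parsed again on load.
    return json.dumps(s._asdict())


def from_text(text: str) -> Settings:
    return Settings(**json.loads(text))


def to_blob(s: Settings) -> bytes:
    # Binary form: every field sits at a known offset.
    return LAYOUT.pack(*s)


def from_blob(blob: bytes) -> Settings:
    return Settings(*LAYOUT.unpack(blob))
```

The price of the blob is that nobody can read or fix it with a text editor, and the layout has to be versioned whenever a setting is added, which is probably part of why text remains attractive for sidecars.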

So, abandoning the database would only limit the possibilities to handle the above described problems more effectively.

PS : Recently, Lightroom moved all the presets that were stored in JSON/DOP-like format (.lrtemplate files) to the XML (XMP) format. There must be a reason.

I do the same thing in Lr and PL: I set the app to NOT automatically save sidecar files.

On the other hand, I do save sidecars, mostly for two reasons:

- Lr: transporting metadata and settings

- PL: establishing a baseline that I can always revert to

(historic reasons and still quicker than searching the history)

[OFF-TOPIC ON]

In LR, I have created a smart collection showing the images that have the “metadata changed” flag set. So, it’s very easy to update all affected XMP files : display the collection | Ctrl-A | Ctrl-S.

[OFF-TOPIC OFF]

That’s exactly my style of working. Which files have to be deleted? I’m on Mac.

Smart idea… Beware: your action (Ctrl-A | Ctrl-S) overwrites the sidecars with the database’s content. You might lose some wanted metadata changes coming from external apps that can write to .xmp if you ask them nicely…

Syncing sidecar content in several apps might be difficult, at least for the user doing it.

Is XML a good and simple cross-compatible format for both platforms?

Remark: At work we use the .yaml file structure for configuration files. It’s more digestible for the software engineer than the XML format.

Absolutely. That’s why it is so widely used. Any application on any platform can read any XML file and manipulate it. Understanding the contents depends on whether you have information about the XML vocabulary (set of tags) used by the XML file but very often, if correctly designed, this vocabulary can be easily understood by just reading the XML text. XMP is actually an XML vocabulary. There are a lot of XML vocabularies available (but you can create your own). Each of them specifies a set of tags and attributes for particular industry or business needs. For example, HTML is not an XML vocabulary but XHTML is. An XHTML file uses the HTML tags but has to comply with the XML rules. So the source of an XHTML page is more strictly structured and this makes the job of the browser easier.

XML describes the rules with which the tags and attributes used in the XML file must comply, and the vocabulary defines the tags, the attributes and their meaning. Since all XML files are subject to the same rules, they can all be loaded, read and manipulated with the same set of tools, which are available on all platforms. That’s why a lot of applications are able to read an XMP file, extract valuable information from it or even add custom information to it.
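As a small illustration of "the same set of tools", here is a sketch that reads and extends the keyword list of an XMP packet using nothing but the generic XML parser from the Python standard library. The sample packet is made up and much shorter than a real sidecar; `read_keywords` and `add_keyword` are names I chose for the example. The namespace URIs are the standard RDF and Dublin Core ones that XMP uses for `dc:subject`.

```python
import xml.etree.ElementTree as ET

NS = {
    "x": "adobe:ns:meta/",
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
    "dc": "http://purl.org/dc/elements/1.1/",
}

# Keep the usual prefixes when the tree is written back out.
for prefix, uri in NS.items():
    ET.register_namespace(prefix, uri)

# Made-up, minimal packet for the example.
SAMPLE_XMP = """<x:xmpmeta xmlns:x="adobe:ns:meta/">
  <rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#">
    <rdf:Description rdf:about="" xmlns:dc="http://purl.org/dc/elements/1.1/">
      <dc:subject>
        <rdf:Bag>
          <rdf:li>harbour</rdf:li>
          <rdf:li>sunset</rdf:li>
        </rdf:Bag>
      </dc:subject>
    </rdf:Description>
  </rdf:RDF>
</x:xmpmeta>"""


def read_keywords(xmp_text: str) -> list[str]:
    """Return the dc:subject keywords found in the packet."""
    root = ET.fromstring(xmp_text)
    items = root.findall(".//dc:subject/rdf:Bag/rdf:li", NS)
    return [li.text or "" for li in items]


def add_keyword(xmp_text: str, keyword: str) -> str:
    """Append one keyword to the existing dc:subject bag."""
    root = ET.fromstring(xmp_text)
    bag = root.find(".//dc:subject/rdf:Bag", NS)
    if bag is None:
        raise ValueError("no dc:subject bag in this packet")
    ET.SubElement(bag, f"{{{NS['rdf']}}}li").text = keyword
    return ET.tostring(root, encoding="unicode")
```

Nothing in this code knows about photography; it only knows the XML rules and the names of a few tags from the vocabulary, which is exactly the division of labour described above.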

Thanks for the detailed explanation.

Let’s see what will come…

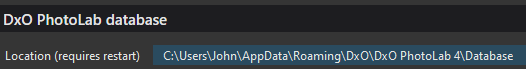

I’m not sure for Mac, but if it’s similar to the Win version then you can find its location listed in your Preferences settings.

John M

On Mac, the database is in the Library folder, which is normally hidden in your home folder. See the screenshot of this post for details:

Except DNG. I made a choice a long time ago to have my camera natively record DNG instead of the proprietary PEF files. So far this has only been a neutral or beneficial decision. One of the benefits is Lightroom can save keywords right into the file itself which is great for portability. No need for sidecars. And yes, PhotoLab reads those keywords just fine.

It’s worked out so well that a while back I grabbed Adobe’s DNG Converter and converted all my old PEF files, so now apart from early JPEGs my entire library is DNG.

A few years back I ran into the concept of DNG, did the reading and hung out on the forums of geeks and pros to understand the use and impact of the choice.

1 It’s a “standard”, but for how long? PDF is a standard too, but it still varies massively in its characteristics and causes a swarm of trouble due to the loose definitions of what a PDF is.

2 It’s a container, and a container is always “bigger” in size, which matters when you have to store the original raw file and the DNG file.

3 An advantage is that you can edit it and pass it on without loss (when it’s DNG to DNG). (No lossy character like a TIFF.)

4 Most DNGs are linear (edit: if not all) and thus a converted raw file, often with a limited color space. (Not the latest one that DxOPL offers.)

5 It’s a handy future-safe storage for when your raw files aren’t readable by applications anymore (well, I think the DNG converter will still be there if this happens).

6 Possibility to write EXIF/IPTC/anything in this container.

Probably more points, but I forgot a lot.

The biggest drawback for me at the time was the file size, and how dodgy the DNG “standard” is: not readable by all developer applications, or exported versions shift color when fed into other apps. (Raw files do too, by the way, due to interpretation differences.)

In a stationary setting, with no travelling of files or person, a raw file works fine. Extra XMPs are needed and they clutter your view screen, but that’s it.

The strength of a good type of DNG is moving between applications without any loss after the conversion from raw. So, as I said earlier, in a company setup where images are used more than once, or by more than one person, or in more than one editing sequence, it’s a powerful time saver.

You create a preset for a type of file, export this as DNG, and you have a “base point” which can be used for all your needs.

Conclusion: I tried this DNG thing and got frustrated by the file size growth and the color shift when passing through raw developer apps. (OK, only two: DxOPL and Silkypix or ACR? (DNG converter, kind of.))

And the “stress of choice”: which correction do I do where?

The first linear DNG from DxO was limited to AdobeRGB, so you edited exposure, WB, tone, contrast and DR (black point, white point) so it fitted in the color space. And then you did this again in the second one (the fiddling) because it looked different…

So the project was abandoned because it gave me no extra gain. (Edit: a TIFF is more stable in its color when passed around.)

BUT, now we have colorspace of the rawfile!!!

It’s a new day.

I still have “choice stress” probably, but now I can use this container to leave the good old 16-bit AdobeRGB TIFF behind when I want to go multi-app hopping. (Will the Nik Collection be upgraded to read DxO DNGs?)

Panorama stitching: eh, my camera does that. I don’t have a tool for doing it manually, but this would help to balance WB differences if I had such an app.

Stacking: yeah, fun! In camera, and splitting a 4K movie into JPEGs to do it manually. Not often, but it’s fun to play with.

I now have a new application which can do more than focus stacking in “raw file” modes. I need to see how it really holds up in use, but I see a use for DNG peeking around the corner.

Shoot a burst in raw, or a time-lapse, or a sequence, or manually one every week, or a multi-exposure (oh wait, that’s already one raw, or not?), run them through DxOPL, export a DNG and you have the base set for stacking, stitching, or cleaning up stills of people walking in a building, without any transfer losses…


(Still, I’m not converting my whole archive to DNG… sorry.)

Each to their own, especially as some native RAW formats are both widely supported and may confer some other benefits. But…

Since deciding to switch entirely to DNG, I am not converting any new RAW files to DNG: I changed the setting on my camera, and the camera writes the data directly into the DNG file itself. If I am to trust anyone to do the most accurate job of that, it should be the camera manufacturer.

I, too, found the file size to be a problem when I first tried native DNG shooting. This was also manifested in a much slower time to write to the memory card which reduced the rate at which I could take pictures. However, a later camera model completely solved this problem. The DNG files produced in camera are very close in size to the native RAW files. In many cases with images from older cameras, the DNG files are smaller than the originals when converted by Adobe DNG Converter.

I do not completely understand the DNG concept, but I believe it may only partially solve the compatibility problem as, for example, PhotoLab will not read all DNG files (it couldn’t even read the ones it produced with PL3). So it still requires that the software support the format within the DNG. In this respect, it would appear to be a draw: support for the native RAW or for the particular flavour of DNG is necessary either way.

But then to move on to the benefits of DNG… being able to accept metadata and many apps able to access and update that metadata… and I see a clear win for DNG on this aspect. I take a DNG out of camera, use LR to add keywords directly into the file, and then process the same file with Luminar, PhotoLab, ON1, etc, and they all pass those keywords out on export to JPEG. Probably they would all read sidecar files too, but if I want to move photos around (and I do reasonably often) then I can simply do that… move the photo… without needing to worry about a separate file which I might lose or may get lost in renames or accidentally duplicated or put in the wrong place. I have one file which is either present or not, with everything in it. Just like every other file type on my computer.

Straying a long way from the topic of the thread, but:

True, they’re not well documented by the camera makers. However they are very transparent and very well understood; they are all (or virtually all) built on the TIFF model. See this explanation by Bogdan Hrastnik on the ExifTool website for more.

Re DNGs, as mentioned in another thread, the format never really took off in the way that it was envisaged and personally I can’t see any good reason for using it (except as a better native alternative to JPEG on your mobile phone). If you do use it, at least learn the pros and cons.

Ugh. I had written up a diatribe but it was too much. So I’ll boil it down. If we choose our technologies on popularity and what might happen in the future rather than on what they give us today, then we’ll miss out on the benefits and still be surprised when the next unexpected change comes around the corner.

Oh, and DNG is based on TIFF, too.

Thanks for the summary and the reference to the MWG.

Horses for courses. I’ve always avoided DNG for the same reason, since it means that incremental backups see a change to a relatively large DNG instead of a small sidecar. Wouldn’t care if Lightroom (LR6 at least, the last version I used) gave me a choice, but it doesn’t.

I dislike databases that contain all editing information in one big blob even more, for the same reason (among others).

An interesting point I had not considered. Though it would not affect me much as I tend to import all of the files, then keyword them straight away. Given my backups are hourly, there is a chance some or all will be backed up twice, but that isn’t of much concern to me considering my cloud backup size is unlimited and I do not do a local backup because of the required size anyway.