Agreed. Some raw converters (e.g. darktable) perform 32-bit floating point calculations for precision.

Thank you, @sankos … I had not read that post (its title sounded too specific & not relevant to my needs).

There’s excellent info therein … definitely well worth reading.

John M

Even if few devices can display colors outside AdobeRGB, I can think of no good reason to restrict the working color space to AdobeRGB. It only means less precision for color calculations inside PhotoLab, and inside Affinity Photo when using 16-bit TIFFs exported from PL. I think it is just a historically grown decision.

I couldn’t agree more.

At some point DxO might take this into consideration for a future major release…

Thank you, I will read it too.

I found some help about color management here (in French here).

The author of this site is Arnaud Frich, a French photographer.

I’ve tried the Nik Collection with ProPhoto and there is a huge color shift; only images converted to Adobe RGB or sRGB work, at least for me.

I am not that well informed about color spaces, but this I can grasp.

Is there a difference between the calculation color space (the one in which the raw information is converted to “pixel RGB”) and the viewing/editing color space setting (the one you “see” on your screen/monitor)? After a little reading: as far as I understand, the “frame” you lay over the horseshoe of the human visual color space can be moved around within the color space the raw converter can handle.

So clipped colors are not physically gone; they are just not shown on the display/viewing device. Changing the exposure value to avoid highlight clipping, or lifting shadows to get more detail, actually brings “color values” from outside your chosen color space inside this “frame”.

Which brings up the next question, about the sun and moon blinkies (clipping warnings): if I change the display color space and get blinkies in sRGB but not in AdobeRGB, then you get false information if you export in sRGB (a blinkie-free preview does not mean the exported file is free of clipping). Or are the blinkies based on the conversion color space? In white and exposure correction the light blue color warning doesn’t change between the two color spaces, and the color saturation warning didn’t show a difference either. So it seems not to be linked to the viewing color space of the device setting.

You have:

- Base (max) color space, as used in the raw-to-pixel conversion; I believe this is now Adobe RGB. (A bigger one would be better for more precise color calculation in this conversion process, because you can’t make/export a bigger color space than the conversion color space.)

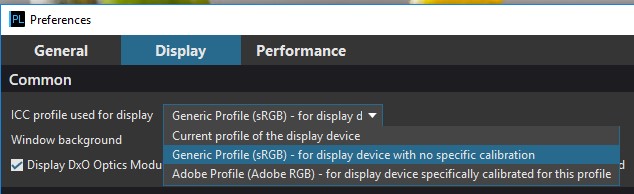

- Setting of the working color space (original, sRGB, AdobeRGB): the viewer’s workspace, where you edit the colors. (This one we can’t set ourselves now.) Wrong: it’s in the display preferences, “ICC profile used for display”.

- Setting of the output color space: original, sRGB, AdobeRGB or custom (chosen when exporting a file, within its possibilities).

I am not sure, but I think that when you shoot raw and set the camera menu to sRGB instead of AdobeRGB, you only limit the JPEG’s color space, not the raw, because that isn’t “color” yet, just data of the lightness measured while the sensor was exposed to light.

So the “Original” setting in DxO only refers to the camera setting in the EXIF data for output, not the actual working space of the raw converter.

One tiny problem seems to be with ProPhoto:

If you start the Nik collection plug-ins from within Affinity Photo the previews are not colour-managed. If you start them from Ps or Photoshop Elements or in the standalone mode they are fine working with ProPhoto 16-bit tiff images. It’s an often reported bug in Affinity Photo plug-in engine (it affects all the plug-ins I use, not just the Nik Collection). I reported it to them two years ago and it still hasn’t been fixed. Hopefully version 1.7 will address that issue.

After reading this, I think we mix up color space with bit depth a bit:

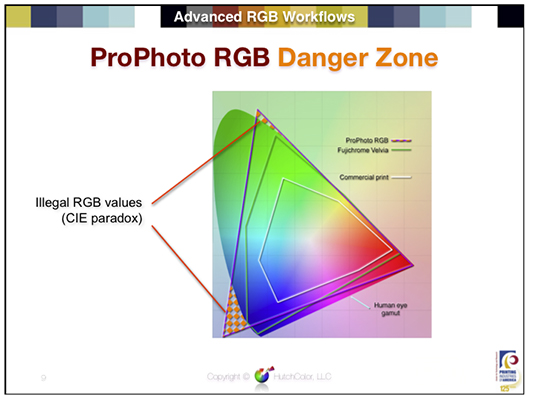

So if we have a given, constant bit depth and an image that only contains colors inside the AdobeRGB range, there should be even fewer rounding errors than if ProPhoto RGB were used. The more colors are encoded in the same number of bits, the fewer code values separate two given colors, and the more likely those two colors are rounded to the same value in the output. ProPhoto covers more colors of the visible spectrum, while AdobeRGB allows finer gradients and more precise calculation in the area where the two color spaces intersect.
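To make the bit-depth argument concrete, here is a minimal sketch, not anything PhotoLab is known to do. It assumes linear encoding (no tone curve), and it uses sRGB and Adobe RGB as the narrow/wide pair rather than Adobe RGB and ProPhoto, because both share the D65 white point and so need no chromatic adaptation. The matrices are the commonly published D65 values; the helper names (`mat_vec`, `srgb_to_adobe`, `count_codes`) are made up for this illustration.

```python
# Commonly published linear RGB <-> XYZ matrices (D65 white point).
SRGB_TO_XYZ = [
    [0.4124564, 0.3575761, 0.1804375],
    [0.2126729, 0.7151522, 0.0721750],
    [0.0193339, 0.1191920, 0.9503041],
]
XYZ_TO_ADOBE = [
    [2.0413690, -0.5649464, -0.3446944],
    [-0.9692660, 1.8760108, 0.0415560],
    [0.0134474, -0.1183897, 1.0154096],
]


def mat_vec(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]


def srgb_to_adobe(rgb):
    """Linear sRGB -> linear Adobe RGB, going through XYZ."""
    return mat_vec(XYZ_TO_ADOBE, mat_vec(SRGB_TO_XYZ, rgb))


def count_codes(colors, bits):
    """Number of distinct integer triples after quantizing to `bits` per channel."""
    levels = (1 << bits) - 1
    return len({tuple(round(c * levels) for c in rgb) for rgb in colors})


# A gradient from gray towards red that lies entirely inside sRGB.
N = 1000
gradient_srgb = [[0.2 + 0.6 * i / (N - 1), 0.2, 0.2] for i in range(N)]
gradient_adobe = [srgb_to_adobe(rgb) for rgb in gradient_srgb]

codes_srgb_8 = count_codes(gradient_srgb, 8)
codes_adobe_8 = count_codes(gradient_adobe, 8)
print("distinct 8-bit codes:", codes_srgb_8, "(sRGB)", codes_adobe_8, "(Adobe RGB)")
```

At 8 bits the same gradient lands on fewer distinct codes in the wider space. At 16 bits every one of these 1000 samples stays distinct in both spaces, which suggests the effect matters far less for 16-bit TIFFs.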

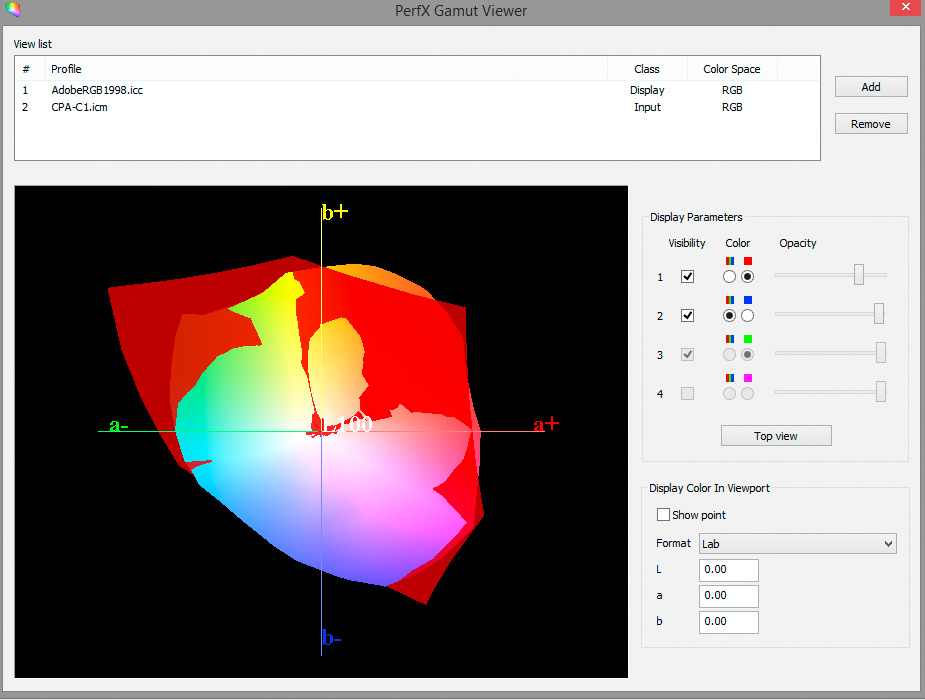

Here is a comparison of the Adobe RGB gamut with the gamut of my camera (Nikon Coolpix A).

As you can see Adobe RGB (the red plot) doesn’t completely encapsulate the gamut my camera is capable of. This is comparable to Bill Claff’s findings with respect to an older dSLR (Nikon D70). His conclusion is quite clear:

To preserve the widest range of colors I recommend using ProPhoto RGB as the working color space.

The rendering intent will take care of gamut mapping for the output device, but out-of-gamut warnings and soft-proofing can help achieve an optimal edit.
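As a rough idea of what an out-of-gamut warning checks, here is a minimal sketch. It is an assumption about the principle, not PhotoLab's actual implementation: convert a linear Adobe RGB color to linear sRGB and flag it if any channel falls outside 0..1. The matrices are the commonly published D65 values; `out_of_gamut` and the tolerance `eps` are hypothetical names chosen for this example.

```python
# Commonly published linear RGB <-> XYZ matrices (D65 white point).
ADOBE_TO_XYZ = [
    [0.5767309, 0.1855540, 0.1881852],
    [0.2973769, 0.6273491, 0.0752741],
    [0.0270343, 0.0706872, 0.9911085],
]
XYZ_TO_SRGB = [
    [3.2404542, -1.5371385, -0.4985314],
    [-0.9692660, 1.8760108, 0.0415560],
    [0.0556434, -0.2040259, 1.0572252],
]


def mat_vec(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]


def adobe_to_srgb_linear(rgb):
    """Linear Adobe RGB -> linear sRGB, without any clipping."""
    return mat_vec(XYZ_TO_SRGB, mat_vec(ADOBE_TO_XYZ, rgb))


def out_of_gamut(rgb, eps=1e-4):
    """True if any channel is below 0 or above 1 (with a small tolerance)."""
    return any(c < -eps or c > 1 + eps for c in rgb)


pure_green = adobe_to_srgb_linear([0.0, 1.0, 0.0])
mid_gray = adobe_to_srgb_linear([0.5, 0.5, 0.5])

print(out_of_gamut(pure_green))  # True: the red channel goes negative in sRGB
print(out_of_gamut(mid_gray))    # False: neutral gray fits in both spaces
```

The same color can therefore trigger a warning for an sRGB target and none for an Adobe RGB target, so a warning is only meaningful relative to the space it is computed against.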

Please find attached a video Endre posted on another thread:

Best

Sigi

Thanks for that. Using Nik from Luminar doesn’t give the color shift that AP does when using ProPhoto.

In my eyes, the working color space is only fixed after exporting out of the raw converter, as JPEG, TIFF or DNG.

Before that it’s changeable, because the data is converted into a camera color space which is cut/clipped by the color space selected in the application, which you can change in some apps.

So I get that the Adobe RGB camera setting gives some extra “room” when processing the OOC JPEG.

Maybe I will change the camera setting to AdobeRGB.

The question I have is: is PL’s AdobeRGB cut out of the camera’s color space, and is the outer section (color data that falls outside AdobeRGB) “thrown” away and not recoverable when fiddling with EV, hue, saturation and contrast? Because if so, then ProPhoto RGB as the base working space is indeed a much better choice: if data is lost in the raw conversion to a smaller color space, the clipped data is gone.

Still, I am convinced that with a non-calibrated sRGB screen I won’t see any difference until I print something.

This is NOT my area of expertise, so I cannot say for sure - - but, I would say, logically speaking, that this would have to be the case … because AdobeRGB is smaller than the camera colorspace.

This is probably only an issue for “future proofing” tho (were there to be future technological advances), as we’re not likely to be able to see any difference in colour detail on our screens (according to my understanding).

Regards, John M

So that is one more reason to keep your raw files and development info in an archive.

If you need a wider gamut or bigger color space in the future, it’s rather easy to redo those by “re-editing”: just adjust the limitations more freely and export again. Done.

This can’t be done if you archive TIFFs or linear DNGs. Those are demosaiced and contain a color space of that time.

That 3D color space visualization “trick” is a very handy way to see how to fit most of the camera data (image) into the available color space, i.e. which colors get clipped.

Is that an expensive feature?

This is a question best answered by people like @wolf because I can only speculate. If the camera colour space got mapped just once to the working space at the beginning of the imaging pipeline (without some further re-mapping), and if the relative colorimetric rendering was used for the mapping, then PhotoLab would re-map the out-of-AdobeRGB colours so that in effect there were no colour subtleties preserved for those initially out-of-gamut colours.
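Here is a minimal sketch of why subtleties would be lost in that scenario. It uses per-channel clamping as a crude stand-in for relative colorimetric mapping (real colour management modules clip more carefully, so this is an assumption for illustration only), and Adobe RGB to sRGB as the wide/narrow pair because both share the D65 white point. The function names are hypothetical.

```python
# Commonly published linear RGB <-> XYZ matrices (D65 white point).
ADOBE_TO_XYZ = [
    [0.5767309, 0.1855540, 0.1881852],
    [0.2973769, 0.6273491, 0.0752741],
    [0.0270343, 0.0706872, 0.9911085],
]
XYZ_TO_SRGB = [
    [3.2404542, -1.5371385, -0.4985314],
    [-0.9692660, 1.8760108, 0.0415560],
    [0.0556434, -0.2040259, 1.0572252],
]


def mat_vec(m, v):
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]


def clip_to_srgb_16bit(adobe_rgb):
    """Convert linear Adobe RGB to linear sRGB, clamp to 0..1, quantize to 16 bits."""
    srgb = mat_vec(XYZ_TO_SRGB, mat_vec(ADOBE_TO_XYZ, adobe_rgb))
    clamped = [min(1.0, max(0.0, c)) for c in srgb]
    return tuple(round(c * 65535) for c in clamped)


# Two clearly different saturated greens, both outside the sRGB gamut.
green_a = [0.0, 1.0, 0.0]
green_b = [0.1, 1.0, 0.0]

code_a = clip_to_srgb_16bit(green_a)
code_b = clip_to_srgb_16bit(green_b)
print(code_a, code_b, code_a == code_b)
```

Both inputs end up on the same code value, so no later adjustment of exposure, hue or saturation can tell them apart again. A perceptual intent would instead compress the whole range and keep some of that difference.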

Well, I can see subtle differences for some colours outside of Adobe RGB on my pretty old wide-gamut monitor (esp. in the yellow/red range).

I did this colour management test with PhotoLab2 and it appears the program uses the Perceptual rendering intent (at least for jpegs). Additionally I learnt that the thumbnails in PhotoLibrary and in the image browser are not colour-managed in the sense that the embedded profile is not read by PhotoLab.

Thank you sankos,

Thank you for confirming / clarifying how PhotoLab 2 handles our colour profiles …

… but thank you also for providing those test images for us (from the link that you kindly provided), so that we can see how well (or how badly!) our other programs and browsers are handling the ICCv2 profiles (while taking account of the few limitations mentioned in your text on that linked page).

Colin P.

You’re welcome, Colin. Glad you’ve found it useful.

Hi, agreed of course!

When you talk about ProPhoto or Melissa, you talk about the gamut of course (which is the same for both), not their TRC. A workspace must be linear. Moreover, these spaces are not the largest available …
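A small sketch of why the tone response curve (TRC) matters for the working space. It uses the standard sRGB tone curve, which is the curve Melissa RGB is usually described as sharing, and compares a 50/50 mix of black and white done on linear values with the same mix done on encoded values. The function names are just for this illustration.

```python
def srgb_encode(linear):
    """Linear light (0..1) -> sRGB-encoded value (0..1)."""
    if linear <= 0.0031308:
        return 12.92 * linear
    return 1.055 * linear ** (1 / 2.4) - 0.055


def srgb_decode(encoded):
    """sRGB-encoded value (0..1) -> linear light (0..1)."""
    if encoded <= 0.04045:
        return encoded / 12.92
    return ((encoded + 0.055) / 1.055) ** 2.4


black, white = 0.0, 1.0

# Mixing in linear light: physically half the light.
linear_mix = (black + white) / 2

# Mixing the encoded values, then decoding to see how much light that really is.
encoded_mix = (srgb_encode(black) + srgb_encode(white)) / 2
naive_mix = srgb_decode(encoded_mix)

print(linear_mix, round(naive_mix, 3))  # 0.5 0.214
```

The encoded-domain mix comes out at well under half the light, which is why operations such as blending, resizing or exposure scaling are normally done on linear data regardless of which gamut the primaries define.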